HTTP request smuggling is an old but very interesting vulnerability. It was revived in 2019 by James Kettle's research, and since then it has gained considerable popularity among security researchers. HTTP request smuggling can let an attacker bypass internal security controls, which can in turn lead to access to protected resources such as the admin console. But before getting into the details, we should first cover the basics.

What is HTTP?

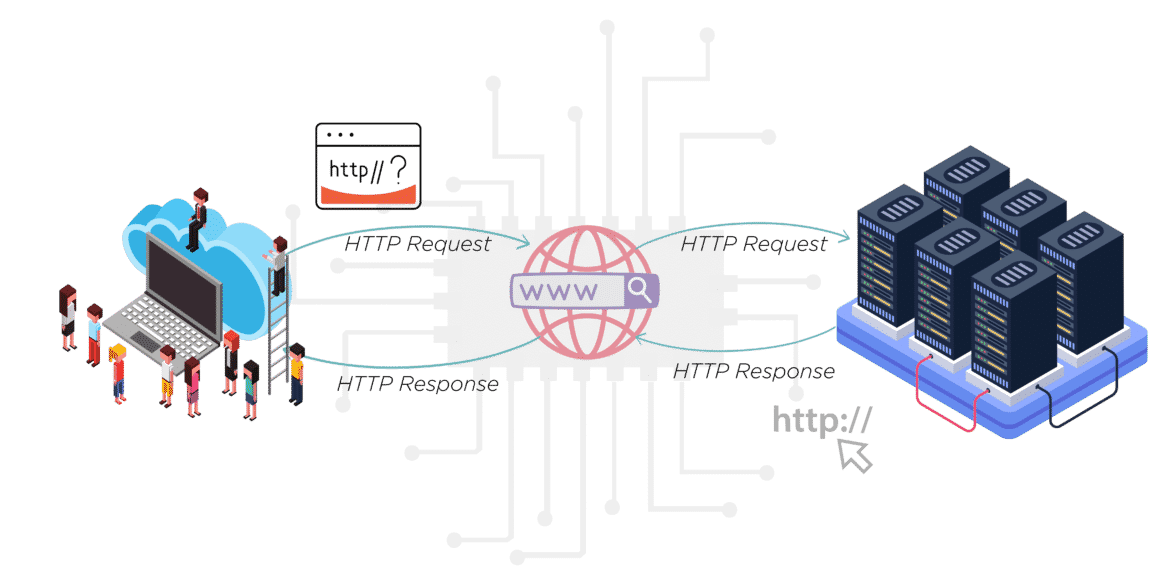

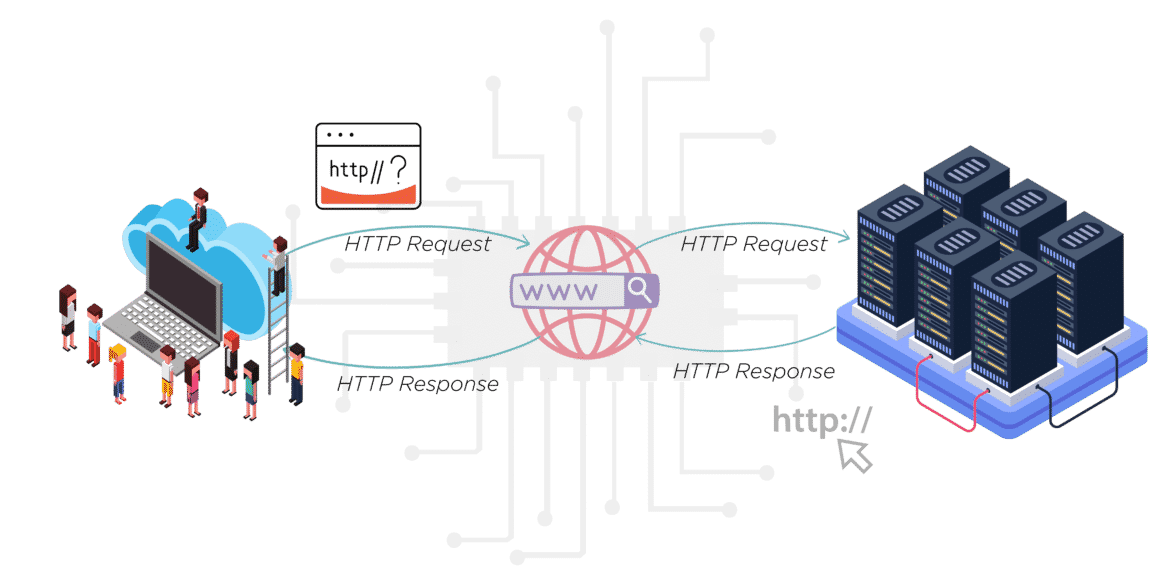

HTTP is a protocol that allows fetching resources, such as HTML documents. It is the foundation of any data exchange on the Web, and it is a client-server protocol. A server receives requests from the browser that follow the HTTP format. It then responds with an HTTP response that any browser can parse.

Components of HTTP-based systems

Each request is sent to a server, which handles it and provides an answer, called the response. Between the client and the server, there are numerous entities, collectively called proxies, which perform different operations and act as gateways or caches, for example.

Client :

The browser always initiates requests. The server never initiates messages (though some mechanisms have been added to simulate server-initiated messages over the years). When presenting a Web page, a browser sends a request to fetch the HTML document that represents the page.

Server :

The server is on the other end of the communication channel, serving the document to the client. In reality, a server can be a collection of computers sharing the load (load balancing) or a complex piece of software interrogating other computers (such as a cache, a database server, or an e-commerce server), generating the document entirely or partially on demand.

Proxies:

Numerous computers and machines relay HTTP messages between the Web browser and the server. Because of the layered structure of the Web stack, most of these operate at the transport, network, or physical levels. They are transparent at the HTTP layer, although they can affect performance. Those that operate at the application layer are generally called proxies. Proxies can be transparent, in which case they forward requests without altering them in any way, or non-transparent, in which case they alter the request in some way before passing it along to the server. Proxies are often used for caching, filtering, load balancing, authentication, and logging.

HTTP Messages

Browsers communicate using HTTP. Each time you load a web page, you send an HTTP request to the site’s server, and the server responds with an HTTP response.

Requests

Once your server receives the request, it performs some processing and responds.

The server's response consists of two sections: the headers and the body. The headers contain the response's metadata, such as the content length (how large the body is), the content type, and the status code. The body is what you see on the page, typically HTML and CSS. Most of a response's data lives in its body, not in its headers.
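To make the headers/body split concrete, here is a minimal Python sketch that separates a raw response into its status code, headers, and body. The sample response and the `parse_response` helper are made up for illustration; real responses carry many more headers.

```python
def parse_response(raw: bytes):
    """Split a raw HTTP/1.1 response into (status code, headers, body)."""
    # Headers and body are separated by the first blank line (CRLF CRLF).
    head, _, body = raw.partition(b"\r\n\r\n")
    status_line, *header_lines = head.decode("ascii").split("\r\n")
    _version, code, _reason = status_line.split(" ", 2)
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return int(code), headers, body


# Hypothetical response, built so that Content-Length matches the body.
page = b"<h1>Hello</h1>"
raw_response = (
    b"HTTP/1.1 200 OK\r\n"
    b"Content-Type: text/html\r\n"
    b"Content-Length: " + str(len(page)).encode("ascii") + b"\r\n"
    b"\r\n"
) + page

status, headers, body = parse_response(raw_response)
print(status, headers["content-type"], len(body))
```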

URI

How do you know where to send a request you make on the web? Uniform Resource Identifiers, or URIs, are used to accomplish this. You may also know them as URLs. Either way is fine. Take a look at the following URI.

https://securityboat.net/about

This URI is broken into three parts:

Protocol (https): the protocol is the way we send our requests. Different types of protocols are used on the Internet.

Domain name (securityboat.net): a domain name is a string of characters that identifies the web server hosting a particular website. Other examples include netflix.com and demonslayer.com.

Path (/about): we want to load a particular part of the website. securityboat.net has many pages, so the specific resource we want is /about.
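As a quick illustration, Python's standard `urllib.parse.urlsplit` breaks the same URI into these three parts:

```python
from urllib.parse import urlsplit

parts = urlsplit("https://securityboat.net/about")

print(parts.scheme)  # the protocol
print(parts.netloc)  # the domain name
print(parts.path)    # the resource path
```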

HTTP Requests

GET /search?q=test HTTP/2

User-Agent: curl/7.54.0

Accept: */*

In the above request, “GET” is the HTTP method specifying what the request generally wants.

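Some common HTTP methods and what they are intended for: GET retrieves a resource, POST submits data to the server, PUT replaces a resource, DELETE removes a resource, and HEAD asks for the headers only.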

Status Codes

Whenever there is a successful response (you'll know because the page loads without any errors), the status code is 200. There are other status codes you should become acquainted with, and 404 (Not Found) is probably the one you will see most often after 200. Status codes are categorised by their first digit: 1xx is informational, 2xx is success, 3xx is redirection, 4xx is a client error, and 5xx is a server error.
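Grouping by the first digit can be sketched in a few lines of Python. The `status_class` helper is hypothetical; the class names are the standard HTTP ones.

```python
def status_class(code: int) -> str:
    """Return the category of an HTTP status code based on its first digit."""
    classes = {
        1: "informational",
        2: "success",
        3: "redirection",
        4: "client error",
        5: "server error",
    }
    return classes.get(code // 100, "unknown")


for code in (200, 301, 404, 500):
    print(code, status_class(code))
```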

Now let’s move to client and server architecture.

Client Server Architecture:

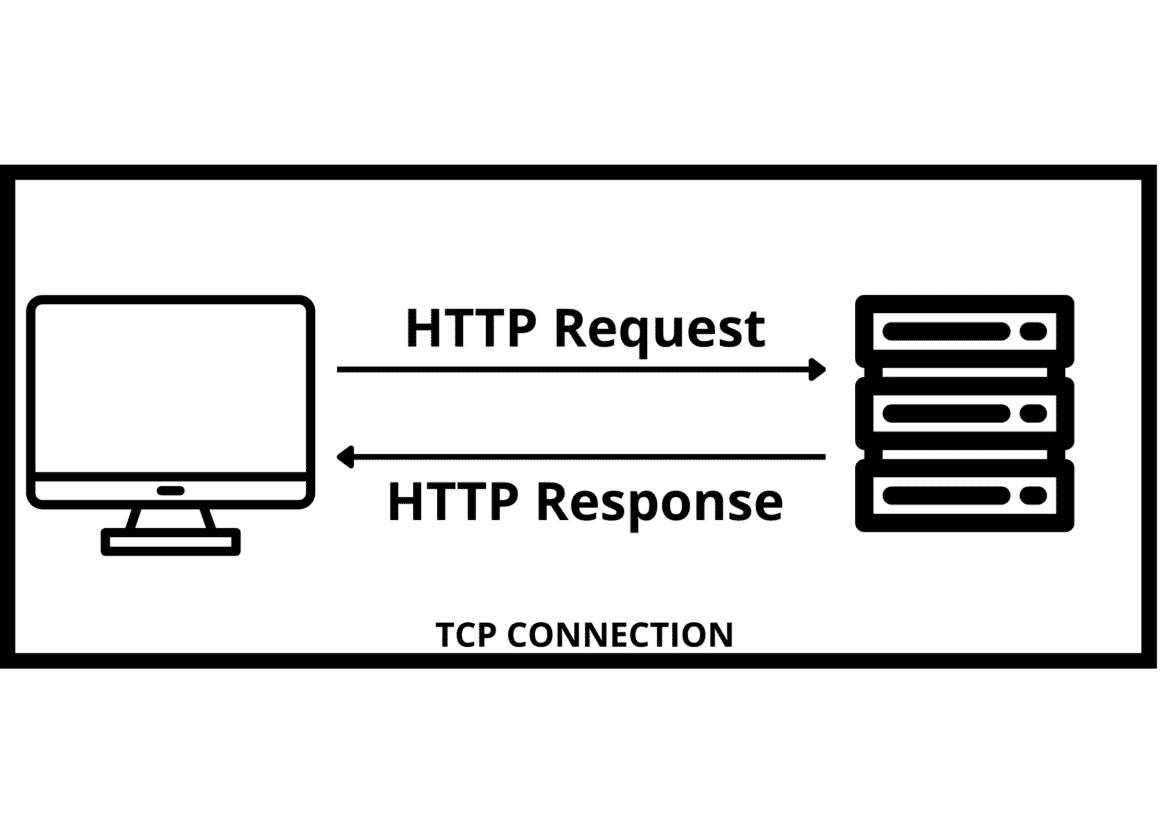

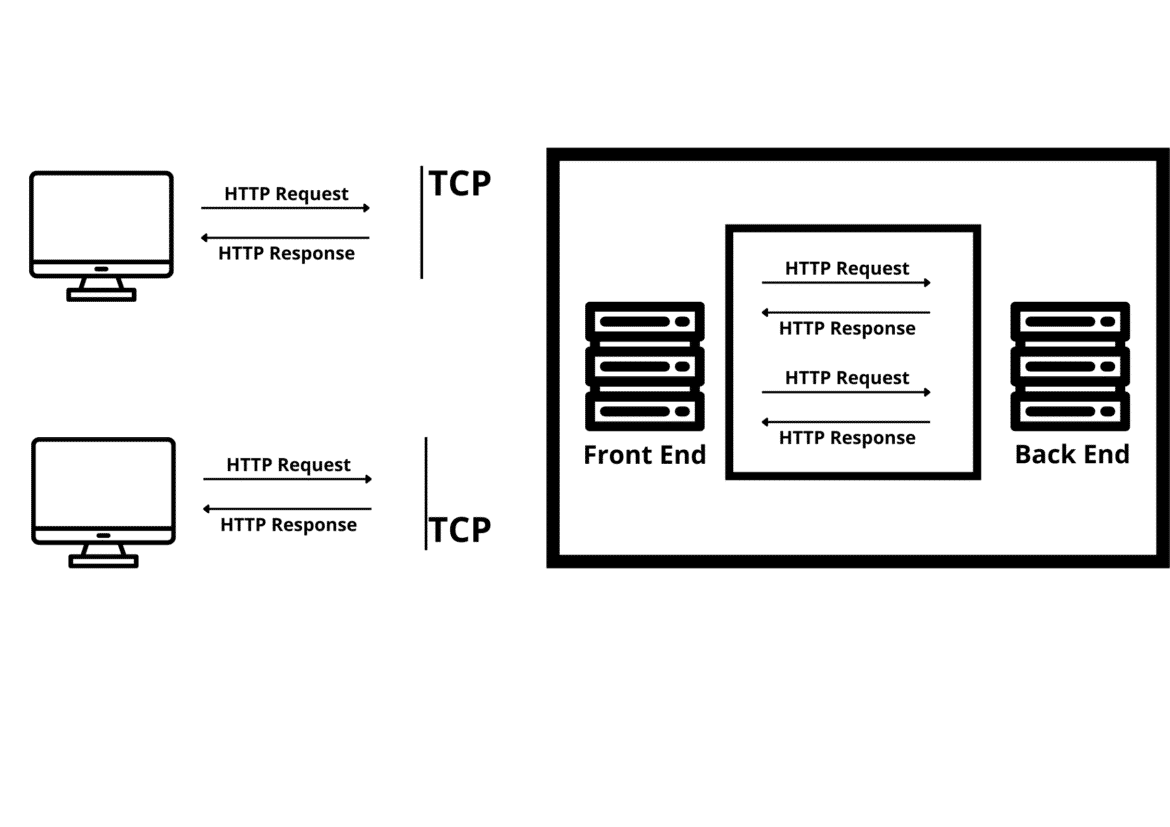

In the basic client-server architecture, a TCP connection is established first, followed by TLS. Next, we send HTTP requests and get HTTP responses from the server. But this describes the old client-server architecture. The architecture used in the real world today is multi-tiered.

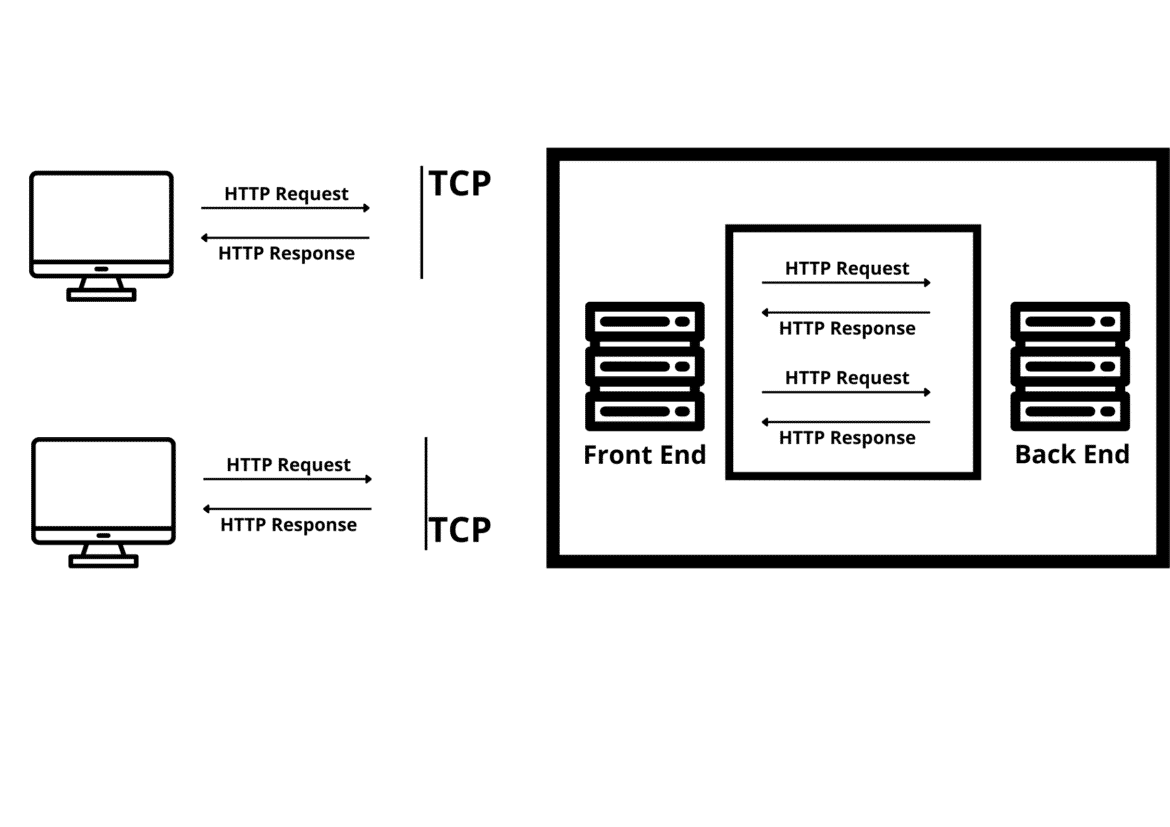

Multi-Tiered Client Server Architecture:

In a multi-tiered architecture, there are two kinds of servers: front-end and back-end. The front-end servers are usually reverse proxies or load balancers. So, what is a reverse proxy server? It is a proxy server that resides behind a firewall and directs clients' requests to the appropriate back-end server. It also hides the web server's identity. A load balancer distributes incoming network traffic across different back-end servers.

New TCP and TLS connections are also established between the front-end and back-end servers to exchange requests.

Why is TCP used with HTTP?

TCP is a transport layer protocol, and HTTP is an application layer protocol. HTTP itself has no mechanism for reliable delivery, so it cannot handle data loss, whereas TCP can. HTTP therefore relies on TCP to carry its messages reliably. Does that mean you need a separate TCP connection for every HTTP request? Well, that's how it all started.

HTTP/1.0, by default, opens a TCP connection for every HTTP request that is made.

You can manually alter this behaviour by including Connection: keep-alive in the request. This keeps the TCP connection open so that HTTP requests can be sent one after another.

In HTTP/1.1, a TCP connection is persistent by default, and multiple HTTP requests can be sent over the same connection.

Additionally, the Connection: close header can be used to close the connection. Now comes the interesting part.
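The defaults described above can be summed up in a small decision function. This is only a sketch: `is_persistent` is a hypothetical helper, and it assumes the header names have already been lower-cased.

```python
def is_persistent(version: str, headers: dict) -> bool:
    """Decide whether the TCP connection stays open after this request."""
    tokens = {t.strip().lower() for t in headers.get("connection", "").split(",")}
    if "close" in tokens:
        return False              # explicit opt-out
    if version == "HTTP/1.1":
        return True               # persistent by default
    return "keep-alive" in tokens  # HTTP/1.0 needs an explicit opt-in


print(is_persistent("HTTP/1.0", {}))
print(is_persistent("HTTP/1.0", {"connection": "keep-alive"}))
print(is_persistent("HTTP/1.1", {}))
print(is_persistent("HTTP/1.1", {"connection": "close"}))
```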

How do servers distinguish requests?

A GET request has a request line and headers. The request line contains the method and the URL and ends with the HTTP version. The server understands the standard headers and their values, and it generally ignores the ones it does not understand. The headers end with a blank line, and since a GET request normally has no body, the end of the headers marks the end of the request. So GET requests don't seem like a big problem, do they?

What about POST requests? POST bodies vary from application to application and framework to framework. To tell the server where a body ends, there are two headers.

Content-Length (CL):

Content-Length specifies the length of the message body in bytes.

The length of the POST body is counted in bytes and includes any CRLF (\r\n) characters inside the body.
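Here is a minimal Python sketch of a server using Content-Length to find the end of one request on a shared connection. The host name, paths, and the `read_by_content_length` helper are invented for illustration.

```python
def read_by_content_length(stream: bytes):
    """Return (body, leftover) for the first request in the stream."""
    head, _, rest = stream.partition(b"\r\n\r\n")
    length = 0
    for line in head.split(b"\r\n")[1:]:  # skip the request line
        name, _, value = line.partition(b":")
        if name.strip().lower() == b"content-length":
            length = int(value.strip())
    # Exactly `length` bytes belong to this request; the rest is the next one.
    return rest[:length], rest[length:]


body = b"user=alice\r\n"  # the trailing CRLF is counted too: 12 bytes
stream = (
    b"POST /login HTTP/1.1\r\n"
    b"Host: example.test\r\n"
    b"Content-Length: " + str(len(body)).encode("ascii") + b"\r\n"
    b"\r\n"
) + body + b"GET /next HTTP/1.1\r\nHost: example.test\r\n\r\n"

first_body, leftover = read_by_content_length(stream)
print(first_body)
print(leftover)
```

Whatever follows the counted bytes is treated as the start of the next request, which is exactly the property that smuggling abuses.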

Transfer-Encoding (TE):

Transfer-Encoding headers can be used to specify the chunked encoding for message bodies. Chunked encoding means that data chunks are embedded within the message body. Each chunk contains the chunk size in bytes (expressed in hexadecimal), followed by a newline and the chunk contents. The message ends with a chunk of size zero.
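The chunk format can be sketched with a small encoder and decoder in Python. Both helpers are hypothetical, and the sketch assumes no chunk extensions and no trailer headers.

```python
def encode_chunked(chunks):
    """Encode an iterable of byte strings as a chunked message body."""
    out = b""
    for chunk in chunks:
        # Chunk size in hexadecimal, CRLF, chunk contents, CRLF.
        out += format(len(chunk), "x").encode("ascii") + b"\r\n" + chunk + b"\r\n"
    return out + b"0\r\n\r\n"  # a zero-size chunk ends the message


def decode_chunked(data: bytes):
    """Return (body, leftover) where leftover is whatever follows the final chunk."""
    body, pos = b"", 0
    while True:
        eol = data.index(b"\r\n", pos)
        size = int(data[pos:eol], 16)
        pos = eol + 2
        if size == 0:
            return body, data[pos + 2:]  # skip the closing CRLF
        body += data[pos:pos + size]
        pos += size + 2                  # skip the chunk and its CRLF


encoded = encode_chunked([b"SMUGGLED", b"hello world, chunked!"])
print(encoded)
print(decode_chunked(encoded))
```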

How does HTTP request Smuggling Attack Happen?

We now know that in a real-world multi-tier client-server architecture, multiple HTTP requests can be sent over the same TCP connection. The boundaries of each request are determined by its headers, in particular CL and TE.

It is necessary to ensure that front-end and back-end systems can distinguish between these requests efficiently; otherwise, an attacker may send a malicious request that gets handled differently by front-end and back-end systems.

Depending on the circumstances, a request can use both methods to specify where it ends. According to the HTTP specification, the Content-Length header should be ignored if both Transfer-Encoding and Content-Length headers are present. When multiple servers are in use, however, this can be insufficient! The catch is that:

There are some servers that do not support Transfer-Encoding headers.

There are some servers that support the Transfer-Encoding header.

Therefore, if the front-end and back-end servers disagree on where a request ends, ambiguity results.

The attacker might then craft a request that uses the CL and TE headers in such a way that the front end and the back end process the request differently. Depending on which server uses which header, various scenarios can be defined:

CL.TE: the front-end server uses CL and the back-end server uses TE

TE.CL: the front-end server uses TE and the back-end server uses CL

TE.TE: the front-end and back-end servers both accept TE, but one of them can be induced not to process it

Is it always enough to send both headers in a single request as they are? Of course not! Sometimes this doesn't work, because both servers pick the same header. In that case we still send both headers, but in a way that causes one of the servers to miss one of them. James Kettle's research revealed multiple ways to obfuscate the Transfer-Encoding header so that one server does not process it while the other still does.

Now let’s see some attack scenarios in detail:

CL.TE :

POST / HTTP/1.1

Host: vulnerable-website.com

Content-Length: 13

Transfer-Encoding: chunked

0

SMUGGLED

Here the front-end server uses the Content-Length header, while the back-end server uses the Transfer-Encoding header. This is how a simple HTTP request smuggling attack works:

The front-end server determines from the Content-Length header that the request body is 13 bytes long, up to the end of SMUGGLED. The request is forwarded to the back-end server.

The back-end server processes the Transfer-Encoding header and therefore treats the message body as chunked. It processes the first chunk, which is stated to be zero length, and so treats the request as terminated there. The SMUGGLED bytes are left unprocessed, and the back-end server treats them as the start of the next request in the sequence.
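The disagreement can be reproduced offline with a short Python sketch that frames the same bytes twice, once by Content-Length (the front end's view) and once by chunked encoding (the back end's view). The helper functions are hypothetical and do not model any particular server.

```python
REQUEST = (
    b"POST / HTTP/1.1\r\n"
    b"Host: vulnerable-website.com\r\n"
    b"Content-Length: 13\r\n"
    b"Transfer-Encoding: chunked\r\n"
    b"\r\n"
    b"0\r\n"
    b"\r\n"
    b"SMUGGLED"
)


def split_head(raw: bytes):
    """Return (headers, everything after the blank line)."""
    head, _, rest = raw.partition(b"\r\n\r\n")
    headers = {}
    for line in head.split(b"\r\n")[1:]:
        name, _, value = line.partition(b":")
        headers[name.strip().lower()] = value.strip()
    return headers, rest


def body_by_content_length(raw: bytes):
    headers, rest = split_head(raw)
    n = int(headers[b"content-length"])
    return rest[:n], rest[n:]


def body_by_chunked(raw: bytes):
    _, rest = split_head(raw)
    body, pos = b"", 0
    while True:
        eol = rest.index(b"\r\n", pos)
        size = int(rest[pos:eol], 16)
        pos = eol + 2
        if size == 0:
            return body, rest[pos + 2:]
        body += rest[pos:pos + size]
        pos += size + 2


front_body, front_leftover = body_by_content_length(REQUEST)
back_body, back_leftover = body_by_chunked(REQUEST)
print("front end leftover:", front_leftover)
print("back end leftover: ", back_leftover)
```

The front end sees one complete request with nothing left over, while the back end is left holding SMUGGLED as the beginning of a new request.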

TE.CL

POST / HTTP/1.1

Host: vulnerable-website.com

Content-Length: 3

Transfer-Encoding: chunked

8

SMUGGLED

0

The front-end server processes the Transfer-Encoding header and therefore treats the message body as chunked. It processes the first chunk, which is stated to be 8 bytes long, up to the start of the line following SMUGGLED. It then processes the second chunk, which is stated to be zero length, and so treats the request as terminated there. The request is forwarded to the back-end server.

The back-end server processes the Content-Length header and determines that the request body is 3 bytes long, up to the start of the line following 8. It treats the remaining bytes, starting with SMUGGLED, as the beginning of the next request in the sequence.
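The same kind of offline check works for TE.CL. In this sketch a single hypothetical `frame` function is told which header to trust, so the front end's and back end's views can be compared side by side.

```python
RAW = (
    b"POST / HTTP/1.1\r\n"
    b"Host: vulnerable-website.com\r\n"
    b"Content-Length: 3\r\n"
    b"Transfer-Encoding: chunked\r\n"
    b"\r\n"
    b"8\r\n"
    b"SMUGGLED\r\n"
    b"0\r\n"
    b"\r\n"
)


def frame(raw: bytes, prefer: str):
    """Return (body, leftover), trusting either 'content-length' or 'chunked'."""
    head, _, rest = raw.partition(b"\r\n\r\n")
    headers = {}
    for line in head.split(b"\r\n")[1:]:
        name, _, value = line.partition(b":")
        headers[name.strip().lower()] = value.strip()
    if prefer == "content-length":
        n = int(headers[b"content-length"])
        return rest[:n], rest[n:]
    # Otherwise read chunks until the zero-size chunk.
    body, pos = b"", 0
    while True:
        eol = rest.index(b"\r\n", pos)
        size = int(rest[pos:eol], 16)
        pos = eol + 2
        if size == 0:
            return body, rest[pos + 2:]
        body += rest[pos:pos + size]
        pos += size + 2


front = frame(RAW, "chunked")         # front end trusts Transfer-Encoding
back = frame(RAW, "content-length")   # back end trusts Content-Length
print("front end:", front)
print("back end: ", back)
```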

TE.TE

Both the front-end server and the back-end server support the Transfer-Encoding header, but one of them can be induced not to process it by obfuscating the header in some way.

There are countless ways to obfuscate Transfer-Encoding headers. For example :

Transfer-Encoding: xchunked

Transfer-Encoding : chunked

Transfer-Encoding: chunked

Transfer-Encoding: x

Transfer-Encoding:[tab]chunked

[space]Transfer-Encoding: chunked

X: X[\n]Transfer-Encoding: chunked

Transfer-Encoding

: chunked

TE.TE vulnerabilities can be discovered by modifying the Transfer-Encoding header so that one of the front-end or back-end servers processes it, and the other ignores it.

Depending on whether it is the front-end or the back-end server that does not process the obfuscated Transfer-Encoding header, the rest of the attack takes the same form as the CL.TE or TE.CL vulnerabilities described above.
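To see why obfuscation creates a disagreement, consider two header parsers with different tolerances. Both parsers below are invented for illustration and do not reflect how any specific server behaves: one accepts only an exact header name and value, the other trims whitespace and does a substring match.

```python
def strict_sees_chunked(header_line: str) -> bool:
    """Hypothetical strict parser: exact name, value must be exactly 'chunked'."""
    name, sep, value = header_line.partition(":")
    return sep == ":" and name.lower() == "transfer-encoding" and value.strip(" ") == "chunked"


def lenient_sees_chunked(header_line: str) -> bool:
    """Hypothetical lenient parser: trims whitespace, matches 'chunked' anywhere."""
    name, sep, value = header_line.partition(":")
    return sep == ":" and name.strip().lower() == "transfer-encoding" and "chunked" in value.lower()


VARIANTS = [
    "Transfer-Encoding: chunked",
    "Transfer-Encoding: xchunked",
    "Transfer-Encoding : chunked",
    "Transfer-Encoding:\tchunked",
    " Transfer-Encoding: chunked",
]

# Header lines on which the two parsers disagree are candidates for TE.TE.
disagreements = [v for v in VARIANTS if strict_sees_chunked(v) != lenient_sees_chunked(v)]
for v in disagreements:
    print(repr(v))
```

Any variant on which the two servers disagree reduces the situation to CL.TE or TE.CL, because the server that misses the header falls back to Content-Length.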

This is a two-part blog. We will add a link to part 2, in which we will look at identifying and exploiting HTTP request smuggling vulnerabilities.